Understanding the complexity of a dataset helps a model perform well in unusual circumstances. The dataset directly affects performance, because a model learns little from data that is too simple. Evaluation metrics such as the Dice Similarity Coefficient (DSC) measure how closely the predictions of the model match the actual values.

Quick Outline

This guide covers the following sections:

- What is the Confusion Matrix?

- What is Dice Loss?

- How to Calculate Dice Loss in PyTorch

- Prerequisites

- Example 1: Using Dice() Method

- Example 2: Using Functional Dice

- Example 3: Plotting Dice Loss in Single Value

- Example 4: Plotting Dice Loss in Multiple Values

- Conclusion

What is the Confusion Matrix?

Before getting into the Dice loss and its implementation, it helps to understand how model evaluation usually works. A confusion matrix summarizes the performance of a model by counting its correct and incorrect predictions: true positives (TP), false positives (FP), false negatives (FN), and true negatives (TN). The matrix gives a quick impression of performance, but metrics computed from these counts make that impression precise.
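The counting behind a confusion matrix can be sketched in a few lines of plain Python. This is a minimal illustration for the binary case, not a library routine: the function name confusion_counts and the label lists are hypothetical and invented for this example.

```python
def confusion_counts(preds, targets, positive=1):
    """Count TP, FP, FN, TN, treating `positive` as the positive class."""
    tp = fp = fn = tn = 0
    for p, t in zip(preds, targets):
        if p == positive and t == positive:
            tp += 1  # predicted positive, actually positive
        elif p == positive:
            fp += 1  # predicted positive, actually negative
        elif t == positive:
            fn += 1  # predicted negative, actually positive
        else:
            tn += 1  # predicted negative, actually negative
    return tp, fp, fn, tn


# Hypothetical binary labels, made up for illustration only.
preds = [1, 0, 1, 1, 0, 0]
targets = [1, 0, 0, 1, 1, 0]

tp, fp, fn, tn = confusion_counts(preds, targets)
print(tp, fp, fn, tn)  # 2 1 1 2
```

The four counts are all that the Dice Similarity Coefficient needs, and the true negatives are not used at all.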

What is Dice Loss?

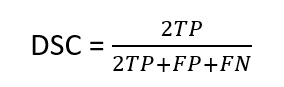

The Dice Similarity Coefficient (DSC) measures the overlap between two sets, which shows how similar they are. In Machine Learning, it is used to evaluate a model by comparing its predictions with the actual values, using the following formula:

DSC = 2TP / (2TP + FP + FN)

Here TP, FP, and FN are the numbers of true positives, false positives, and false negatives taken from the confusion matrix. The same formula measures the similarity between two tensors in PyTorch, or between two datasets in general: multiply the number of true positives by 2, then divide by the sum of twice the true positives, the false positives, and the false negatives.

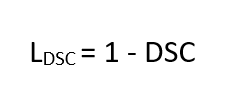
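As a worked example of the DSC formula, the following sketch computes the coefficient from raw counts. The function name dice_coefficient and the counts are hypothetical, and returning 0.0 for an empty denominator is an assumed convention rather than part of the formula.

```python
def dice_coefficient(tp, fp, fn):
    """DSC = 2*TP / (2*TP + FP + FN)."""
    denom = 2 * tp + fp + fn
    # Assumed convention: report 0.0 when there are no positives at all.
    return 2 * tp / denom if denom else 0.0


# Hypothetical counts: 2 true positives, 1 false positive, 1 false negative.
score = dice_coefficient(tp=2, fp=1, fn=1)
print(score)  # 4 / 6, about 0.667
```

A score of 1 means the predictions and the actual values overlap perfectly, while a score of 0 means they share no true positives.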

The Dice loss follows directly from the DSC. Because the DSC measures similarity, with 1 meaning a perfect match, the loss is obtained by subtracting it from 1:

Dice Loss = 1 - DSC
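Since the loss is one minus the DSC, it can be written directly in PyTorch without any extra library. The sketch below uses the common "soft" formulation, in which sums of element-wise products stand in for the confusion-matrix counts. This is a technique assumed for illustration and not taken from this guide; the function name dice_loss and the smoothing term eps are likewise hypothetical.

```python
import torch


def dice_loss(probs, target, eps=1e-6):
    """Soft Dice loss: 1 - DSC, for binary masks or probabilities."""
    probs = probs.flatten().float()
    target = target.flatten().float()
    # For hard 0/1 inputs this sum is exactly the number of true positives.
    intersection = (probs * target).sum()
    # (TP + FP) + (TP + FN) = 2*TP + FP + FN, the DSC denominator.
    total = probs.sum() + target.sum()
    # eps (assumed) avoids division by zero when both tensors are empty.
    dsc = (2 * intersection + eps) / (total + eps)
    return 1 - dsc


# Hypothetical binary predictions and targets, for illustration only.
preds = torch.tensor([1, 0, 1, 1, 0, 0])
target = torch.tensor([1, 0, 0, 1, 1, 0])

loss = dice_loss(preds, target)
print(loss)
```

With these inputs the DSC is 4 / 6, so the loss is about 0.333. Identical tensors give a loss of 0.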

How to Calculate Dice Loss in PyTorch

To calculate the Dice loss in PyTorch, go through the following sections that contain multiple examples of the implementation:

Note: The Python code for the examples can be accessed from here:

Prerequisites

Before working through the examples, complete the following requirements:

- Access Python Notebook

- Install Modules

- Import Libraries

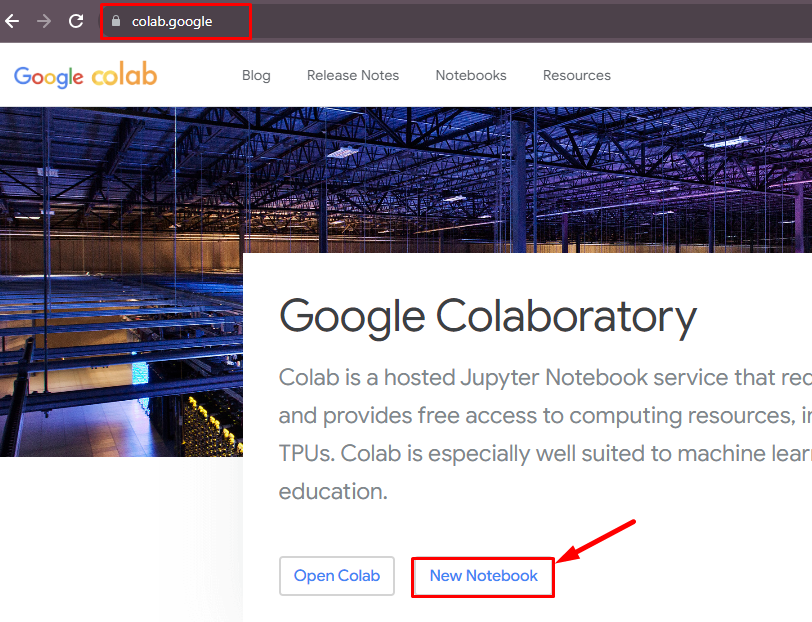

Access Python Notebook

Open a new Python notebook by clicking the “New Notebook” button on the Google Colaboratory page. Other notebook environments, such as Jupyter, work as well:

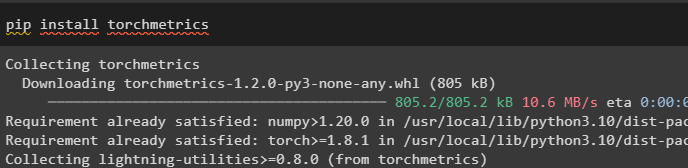

Install Modules

Install the “torchmetrics” library, which contains more than 100 PyTorch metric implementations, using the pip command:

pip install torchmetrics

Import Libraries

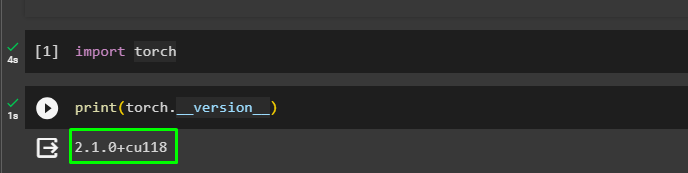

After the module is installed, import the torch library to use its functions for calculating the Dice loss in PyTorch:

import torch

Once torch is imported, verify the installation by printing its version:

print(torch.__version__)

Example 1: Using Dice() Method

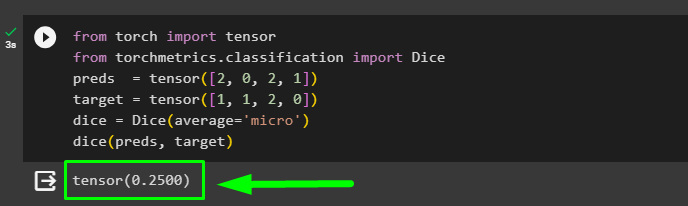

Import the tensor() function and the Dice class to compute the metric on tensors that hold the data. Create a preds tensor containing the predicted values and a target tensor containing the actual values. Then create the Dice metric with the average argument, which selects how the score is averaged (micro, macro, etc.), and call it on the preds and target tensors. The call returns the Dice score, so subtract it from 1 to get the Dice loss. Note that later torchmetrics releases deprecate the classification Dice metric, so if the import fails, check the changelog for the installed version:

from torch import tensor

from torchmetrics.classification import Dice

preds = tensor([2, 0, 2, 1])

target = tensor([1, 1, 2, 0])

dice = Dice(average='micro')

dice(preds, target)

The following screenshot displays the Dice score calculated using the Dice() method:

Example 2: Using Functional Dice

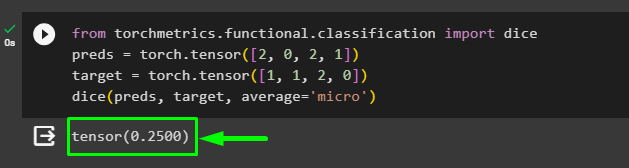

The torchmetrics library also provides a functional interface. The dice() function takes the preds and target tensors together with the same arguments, such as average, and returns the Dice score directly without creating a metric object first:

from torchmetrics.functional.classification import dice

preds = torch.tensor([2, 0, 2, 1])

target = torch.tensor([1, 1, 2, 0])

dice(preds, target, average='micro')
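As a sanity check, the micro-averaged score for the tensors used in Examples 1 and 2 can be reproduced by hand in plain Python. The sketch assumes single-label multiclass data, where every wrong prediction counts once as a false positive (for the predicted class) and once as a false negative (for the true class). The variable names are chosen for this illustration.

```python
preds = [2, 0, 2, 1]
target = [1, 1, 2, 0]

# Micro averaging pools the counts of all classes before applying the formula.
tp = sum(p == t for p, t in zip(preds, target))  # correct predictions: 1
fp = len(preds) - tp  # each miss is a false positive for its predicted class: 3
fn = len(preds) - tp  # ...and a false negative for its true class: 3

dice_score = 2 * tp / (2 * tp + fp + fn)
dice_loss = 1 - dice_score
print(dice_score, dice_loss)  # 0.25 0.75
```

Only one of the four predictions is correct, so the score is 2 / 8 = 0.25 and the corresponding Dice loss is 0.75. Under this assumption the micro-averaged Dice score equals plain accuracy.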

Example 3: Plotting Dice Loss in Single Value

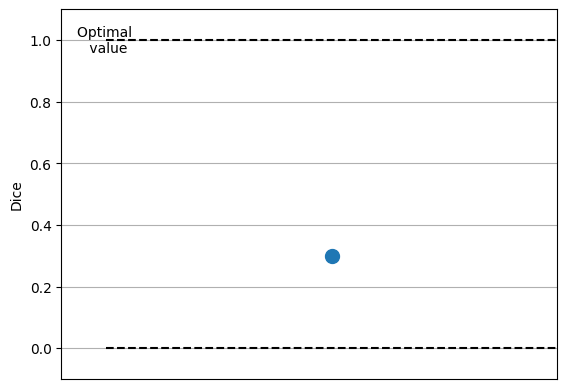

Import the randint() function from the torch module to generate random integers below a given upper limit. For example, randint(2, (10,)) returns 10 values that are each 0 or 1. Then create a Dice metric, pass it random predictions and targets with update(), and plot the resulting Dice score with metric.plot(), which requires matplotlib. The method returns a tuple that is unpacked into fig_, holding the figure, and ax_, holding the axes of the graph:

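from torch import randint

from torchmetrics.classification import Dice

metric = Dice()

# Accumulate one batch of random binary predictions and targets

metric.update(randint(2, (10,)), randint(2, (10,)))

# Plot the accumulated Dice score; returns the figure and its axes

fig_, ax_ = metric.plot()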

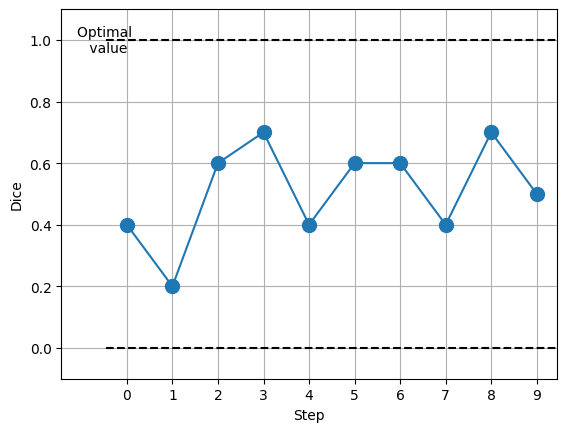

Example 4: Plotting Dice Loss in Multiple Values

The following example calls the metric in a loop, each time on new random tensors produced by randint(), and collects the returned Dice scores in a list. Passing the list to metric.plot() draws one point per step:

metric = Dice()

values = []

for _ in range(10):
    # Each call returns the Dice score of that batch
    values.append(metric(randint(2, (10,)), randint(2, (10,))))

fig_, ax_ = metric.plot(values)

That’s all about the Dice Similarity Coefficient and its loss with the implementation.

Conclusion

To sum up, calculating the Dice loss requires an understanding of the confusion matrix and its values, which are used to evaluate the performance of the model. From the confusion matrix, the user can compute the Dice Similarity Coefficient, and subtracting it from 1 gives the loss.

In PyTorch, the torchmetrics library provides the Dice class to calculate the Dice score between the prediction and target tensors, and its functional interface provides the dice() function for the same purpose. This guide has explained how to calculate the Dice loss in PyTorch with the help of examples.