A loss function is an evaluation technique that measures how far a model's predictions are from the expected target values. During training, the loss is computed for each prediction by checking how much it differs from the target supplied with the training data. Connectionist Temporal Classification, or CTC loss, scores a sequence of per-time-step class probabilities against a shorter target sequence without requiring a frame-by-frame alignment between the two.

Quick Outline

This guide covers the following sections:

- What is CTC Loss

- How to Calculate CTC Loss in PyTorch

- Prerequisites

- Example 1: Calculate Loss Using CTCLoss() Method

- Example 2: Calculate CTC Loss With Padded Target

- Example 3: Calculate CTC Loss With Un-Padded Target

- Example 4: Calculate CTC Loss With Un-Padded and Un-Batched Target

- Example 5: Calculate CTC Loss Using Functional Dependency

- Conclusion

What is CTC Loss

The Connectionist Temporal Classification (CTC) loss function is used when an input sequence has to be matched to a shorter target sequence and the alignment between the two is unknown, which is a challenging task. A major application is speech recognition, where each character of a transcript has to be placed at its correct location in the audio track. CTC introduces a blank label, treats every frame-level labeling that reduces to the target (after merging repeated labels and removing blanks) as a valid alignment, and returns the negative log of the summed probability of all such alignments.
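The definition above can be sketched in plain Python by enumerating every possible path. This is only an illustration for tiny inputs, not how PyTorch computes the loss (real implementations use dynamic programming). The names collapse and brute_force_ctc are hypothetical helpers, and the blank is assumed to sit at index 0:

```python
import itertools
import math

BLANK = 0  # assumed blank index (hypothetical constant for this sketch)


def collapse(path):
    """Merge consecutive repeated labels, then drop blanks."""
    merged = [label for label, _ in itertools.groupby(path)]
    return [label for label in merged if label != BLANK]


def brute_force_ctc(probs, target):
    """Negative log of the summed probability of all paths that collapse to target.

    probs[t][c] is the probability of class c at time step t.
    """
    num_classes = len(probs[0])
    total = 0.0
    for path in itertools.product(range(num_classes), repeat=len(probs)):
        if collapse(path) == list(target):
            total += math.prod(probs[t][c] for t, c in enumerate(path))
    return -math.log(total)


# Toy input: 3 time steps, 3 classes, uniform probabilities.
probs = [[1 / 3] * 3 for _ in range(3)]
loss = brute_force_ctc(probs, [1, 2])
print(collapse([1, 1, 0, 2]), loss)
```

For the target [1, 2] over three time steps, five paths are valid (0 1 2, 1 0 2, 1 2 0, 1 1 2, and 1 2 2), so the summed probability is 5/27.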

How to Calculate CTC Loss in PyTorch

PyTorch offers two ways to compute the loss from input and target values: the CTCLoss() class in torch.nn and the ctc_loss() function in torch.nn.functional. To calculate the CTC loss in PyTorch, go through the following sections:

Note: The Python code to calculate the CTC loss is available here

Prerequisites

Complete the following steps before starting to calculate the CTC loss using the PyTorch environment:

- Access Python Notebook

- Install Modules

- Import Libraries

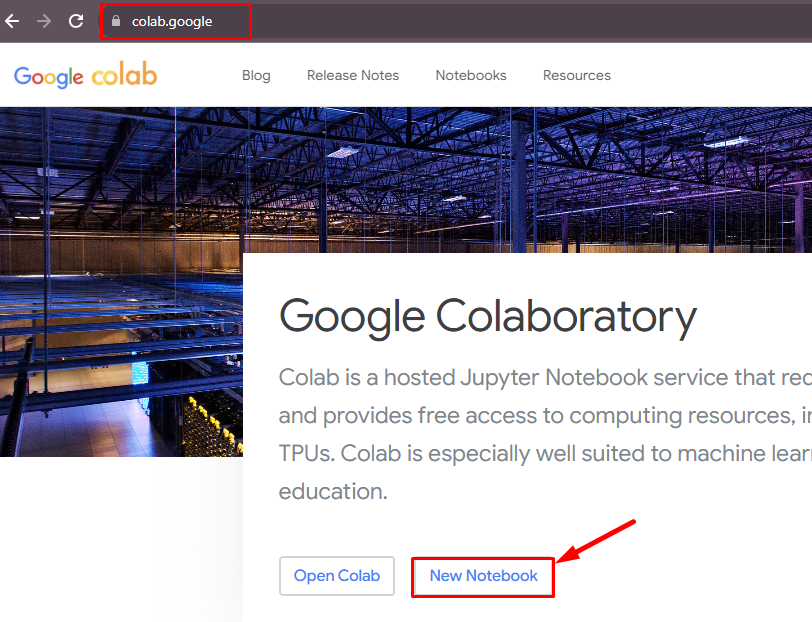

Access Python Notebook

A Python notebook is required to run the code. Open one from the notebook service's official website:

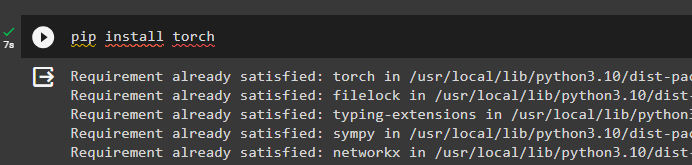

Install Modules

Start the process by installing the torch framework with the pip package manager using the following command:

pip install torch

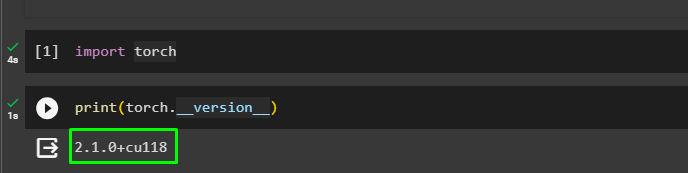

Import Libraries

After that, import the torch library so that its methods are available in the notebook:

import torch

Call the print() method with torch.__version__ to print the installed version of torch:

print(torch.__version__)

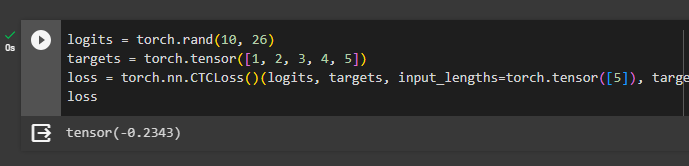

Example 1: Calculate Loss Using CTCLoss() Method

The first example shows the basic use of the CTCLoss() class. Create two tensors, one holding the input log-probabilities and the other holding the target labels. CTCLoss() expects log-probabilities, so the random input is passed through log_softmax(), and input_lengths is set to 10 to match the ten time steps of the input. Store the value returned by CTCLoss() in the loss variable and evaluate it to display the value on the screen:

log_probs = torch.rand(10, 26).log_softmax(dim=1)  # 10 time steps, 26 classes

targets = torch.tensor([1, 2, 3, 4, 5])  # index 0 is reserved for the blank label

loss = torch.nn.CTCLoss()(log_probs, targets, input_lengths=torch.tensor([10]), target_lengths=torch.tensor([5]))

loss
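As a sanity check on what CTCLoss() returns, the following sketch uses a case small enough to verify by hand. With three time steps and uniform probabilities over three classes, exactly five paths reduce to the target [1, 2], so the summed probability is 5/27. The tensor sizes and the choice of reduction="sum" (which skips the default division by the target length) are assumptions made for this illustration:

```python
import math

import torch
import torch.nn as nn

# 3 time steps, batch of 1, 3 classes (index 0 is the blank), uniform probabilities
log_probs = torch.full((3, 1, 3), 1 / 3).log()
targets = torch.tensor([[1, 2]])
input_lengths = torch.tensor([3])
target_lengths = torch.tensor([2])

# reduction="sum" returns the plain negative log-likelihood of the target
loss = nn.CTCLoss(blank=0, reduction="sum")(log_probs, targets, input_lengths, target_lengths)

expected = math.log(27 / 5)  # -log(5/27), from the five valid paths
print(loss.item(), expected)
```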

Example 2: Calculate CTC Loss With Padded Target

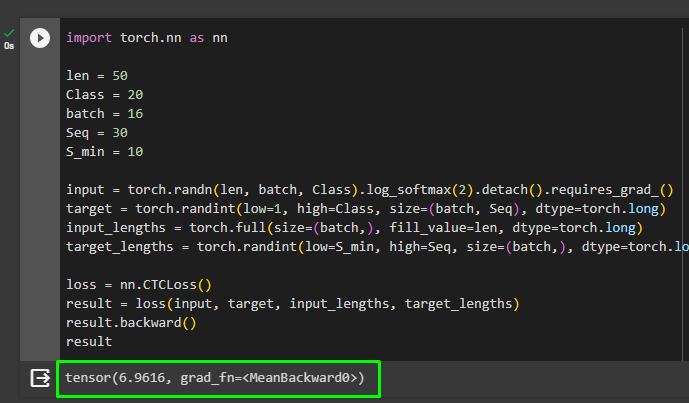

The next example uses a padded target tensor: every target sequence is stored in a row of the same fixed length, and target_lengths records how many entries of each row are real labels. Define variables for the input sequence length, the number of classes, the batch size, and the maximum and minimum target lengths. Build the input with randn() and log_softmax(), and the target with randint(). Apply CTCLoss() to the input, the target, and their lengths, then call backward() and print the loss value:

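import torch.nn as nn

input_len = 50  # input sequence length (time steps)

num_classes = 20  # number of classes, including the blank at index 0

batch_size = 16

max_target_len = 30  # padded length of every target row

min_target_len = 10  # shortest target length

log_probs = torch.randn(input_len, batch_size, num_classes).log_softmax(2).detach().requires_grad_()

target = torch.randint(low=1, high=num_classes, size=(batch_size, max_target_len), dtype=torch.long)

input_lengths = torch.full(size=(batch_size,), fill_value=input_len, dtype=torch.long)

target_lengths = torch.randint(low=min_target_len, high=max_target_len, size=(batch_size,), dtype=torch.long)

ctc_loss = nn.CTCLoss()

loss = ctc_loss(log_probs, target, input_lengths, target_lengths)

loss.backward()

loss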

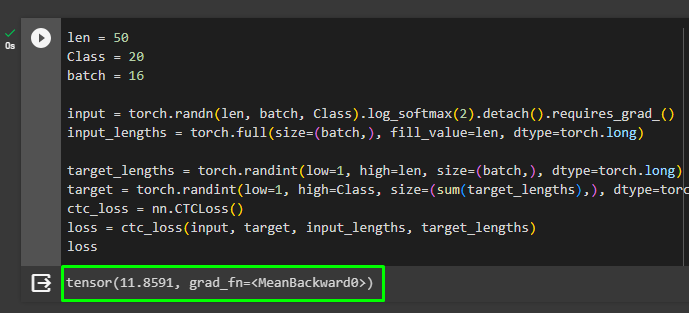
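With padded targets, only the first target_lengths[i] entries of row i are treated as labels. The sketch below illustrates this on CPU tensors by overwriting the padding region and checking that the loss does not change. The sizes, the seed, and the hand-picked target_lengths are assumptions chosen for the illustration:

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)

T, N, C, S = 50, 4, 20, 12  # time steps, batch size, classes, padded target length
log_probs = torch.randn(T, N, C).log_softmax(2)
targets = torch.randint(1, C, (N, S), dtype=torch.long)
input_lengths = torch.full((N,), T, dtype=torch.long)
target_lengths = torch.tensor([12, 9, 5, 7])

loss_a = F.ctc_loss(log_probs, targets, input_lengths, target_lengths)

# Overwrite everything past each row's real length with zeros.
padded = targets.clone()
for i, length in enumerate(target_lengths.tolist()):
    padded[i, length:] = 0

loss_b = F.ctc_loss(log_probs, padded, input_lengths, target_lengths)
print(loss_a.item(), loss_b.item())
```

The two printed values should match, since the entries beyond each target length are not part of the target.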

Example 3: Calculate CTC Loss With Un-Padded Target

This example removes the padding from the target tensor: instead of a two-dimensional (batch, length) tensor, the targets of all samples are concatenated into a single one-dimensional tensor whose size is the sum of target_lengths. The target format itself does not change the loss; the value differs from the previous example because each example draws fresh random tensors with different target lengths. The run shown here produced a loss of 11.8591, compared with 6.9616 in the previous example:

input_len = 50  # input sequence length (time steps)

num_classes = 20  # number of classes, including the blank at index 0

batch_size = 16

log_probs = torch.randn(input_len, batch_size, num_classes).log_softmax(2).detach().requires_grad_()

input_lengths = torch.full(size=(batch_size,), fill_value=input_len, dtype=torch.long)

target_lengths = torch.randint(low=1, high=input_len, size=(batch_size,), dtype=torch.long)

target = torch.randint(low=1, high=num_classes, size=(int(target_lengths.sum()),), dtype=torch.long)  # all targets concatenated

ctc_loss = nn.CTCLoss()

loss = ctc_loss(log_probs, target, input_lengths, target_lengths)

loss

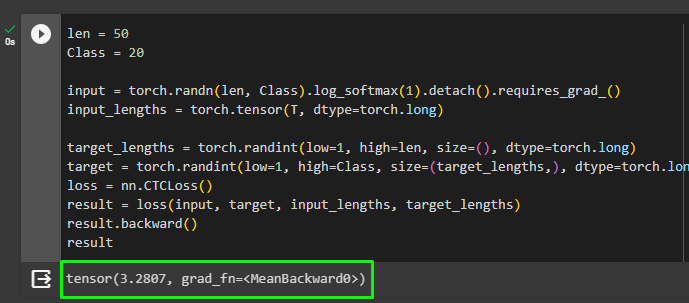
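To illustrate that the padded and un-padded formats describe the same targets, the following sketch builds a small padded tensor by hand, concatenates the real labels of each row, and compares the two losses. The label values, sizes, and seed are assumptions chosen for the illustration:

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)

T, N, C = 50, 3, 20  # time steps, batch size, classes
log_probs = torch.randn(T, N, C).log_softmax(2)
input_lengths = torch.full((N,), T, dtype=torch.long)
target_lengths = torch.tensor([4, 2, 3])

# Padded form: one row per sample, zeros fill the unused positions.
padded = torch.tensor([[5, 6, 7, 8],
                       [9, 3, 0, 0],
                       [4, 4, 2, 0]])

# Un-padded form: keep the first target_lengths[i] labels of each row and concatenate.
flat = torch.cat([row[:n] for row, n in zip(padded, target_lengths.tolist())])

loss_padded = F.ctc_loss(log_probs, padded, input_lengths, target_lengths)
loss_flat = F.ctc_loss(log_probs, flat, input_lengths, target_lengths)
print(flat.tolist(), loss_padded.item(), loss_flat.item())
```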

Example 4: Calculate CTC Loss With Un-Padded and Un-Batched Target

This example uses a target that is both un-padded and un-batched: the input is a single sequence of shape (time steps, classes) with no batch dimension, and the target is a single one-dimensional sequence. The format is convenient for scoring one sequence at a time, but it does not change how the loss is computed. The run shown here produced a loss of 3.2807; as before, the value comes from random tensors, so it cannot be meaningfully compared with the previous examples:

input_len = 50  # input sequence length (time steps)

num_classes = 20  # number of classes, including the blank at index 0

log_probs = torch.randn(input_len, num_classes).log_softmax(1).detach().requires_grad_()

input_lengths = torch.tensor(input_len, dtype=torch.long)

target_lengths = torch.randint(low=1, high=input_len, size=(), dtype=torch.long)

target = torch.randint(low=1, high=num_classes, size=(int(target_lengths),), dtype=torch.long)

ctc_loss = nn.CTCLoss()

loss = ctc_loss(log_probs, target, input_lengths, target_lengths)

loss.backward()

loss

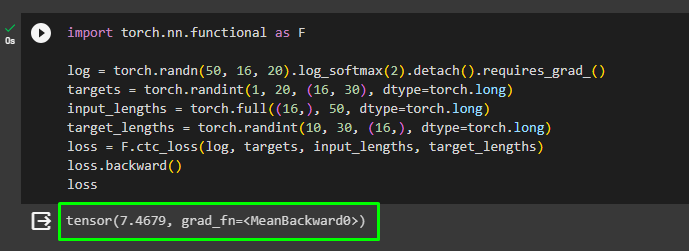
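An un-batched call can be thought of as a batch containing a single sample. The sketch below, which assumes a PyTorch version that accepts un-batched CTC inputs, compares the two forms on the same data. The label values, sizes, and seed are assumptions chosen for the illustration:

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)

T, C = 50, 20  # time steps, classes
log_probs = torch.randn(T, C).log_softmax(1)
target = torch.tensor([3, 7, 7, 1, 12])
input_length = torch.tensor(T)
target_length = torch.tensor(5)

# Un-batched call: (T, C) input, (S,) target, scalar lengths.
loss_unbatched = F.ctc_loss(log_probs, target, input_length, target_length)

# The same data with an explicit batch dimension of size 1.
loss_batched = F.ctc_loss(
    log_probs.unsqueeze(1),      # (T, 1, C)
    target.unsqueeze(0),         # (1, S)
    input_length.unsqueeze(0),   # (1,)
    target_length.unsqueeze(0),  # (1,)
)
print(loss_unbatched.item(), loss_batched.item())
```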

Example 5: Calculate CTC Loss Using Functional Dependency

The next example uses the torch.nn.functional module to call the ctc_loss() function directly instead of creating a CTCLoss() object. Create the tensors for the input log-probabilities (log), the target, and their lengths, and pass them to ctc_loss(). Apply backpropagation to the loss value using the backward() method and print the loss value on the screen:

import torch.nn.functional as F

log = torch.randn(50, 16, 20).log_softmax(2).detach().requires_grad_()

targets = torch.randint(1, 20, (16, 30), dtype=torch.long)

input_lengths = torch.full((16,), 50, dtype=torch.long)

target_lengths = torch.randint(10, 30, (16,), dtype=torch.long)

loss = F.ctc_loss(log, targets, input_lengths, target_lengths)

loss.backward()

loss
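The functional call and the CTCLoss() class should give the same value for the same data. The sketch below checks this and also uses reduction="none" to obtain one loss per sample, from which the default "mean" result (each loss divided by its target length, then averaged over the batch) is rebuilt by hand. The seed and the variable names are assumptions chosen for the illustration:

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

log_probs = torch.randn(50, 16, 20).log_softmax(2)
targets = torch.randint(1, 20, (16, 30), dtype=torch.long)
input_lengths = torch.full((16,), 50, dtype=torch.long)
target_lengths = torch.randint(10, 30, (16,), dtype=torch.long)

# Class-based and functional forms with the same settings.
loss_module = nn.CTCLoss(blank=0, reduction="mean")(log_probs, targets, input_lengths, target_lengths)
loss_functional = F.ctc_loss(log_probs, targets, input_lengths, target_lengths, blank=0, reduction="mean")

# One loss value per sample, then the "mean" reduction rebuilt manually.
per_sample = F.ctc_loss(log_probs, targets, input_lengths, target_lengths, reduction="none")
manual_mean = (per_sample / target_lengths).mean()

print(loss_module.item(), loss_functional.item(), manual_mean.item())
```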

That’s all about how to calculate the CTC loss in PyTorch.

Conclusion

To calculate the Connectionist Temporal Classification loss in PyTorch, install the torch module and import it to access its methods. PyTorch offers the CTCLoss() class and the functional.ctc_loss() function, both of which compute the loss from input log-probabilities, target sequences, and their lengths. The targets can be passed either padded, as a (batch, length) tensor, or un-padded, as a single concatenated tensor; in both cases target_lengths tells the loss how many labels belong to each sample. This guide has walked through the process of calculating the CTC loss in PyTorch.